Chapter 2

Ordinary Differential Equations

2.1 Reduction to first-order

An ordinary differential equation involves just one independent variable, $x$, and a dependent variable $y$. Obviously it involves derivatives of the dependent variable, like $d^k y/dx^k$. The highest-order derivative, i.e. the term with the largest value of $k$ appearing in the equation, defines the order of the equation. So the most general ODE of order $N$ can be written such that the $N$th-order derivative is equal to a function of all the lower-order derivatives and the independent variable $x$:

$$\frac{d^N y}{dx^N} = f\left(\frac{d^{N-1}y}{dx^{N-1}}, \frac{d^{N-2}y}{dx^{N-2}}, \ldots, \frac{dy}{dx}, y, x\right). \tag{2.1}$$

Such an ordinary differential equation of order $N$ in a single dependent variable, $y$, can always be reduced to a set of simultaneous coupled first-order equations involving $N$ dependent variables. The simplest way to do this is to use a natural notation, denoting by $y^{(j)}$ the $j$th derivative:

$$y^{(j)} \equiv \frac{d^j y}{dx^j}. \tag{2.2}$$

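When combined with the original equation, the total system can be written as a first-order vector differential equation whose components are

$$\frac{dy^{(j-1)}}{dx} = y^{(j)} \quad (j = 1, \ldots, N-1), \qquad \frac{dy^{(N-1)}}{dx} = f\left(y^{(N-1)}, y^{(N-2)}, \ldots, y^{(0)}, x\right) \tag{2.3}$$

(where for notational consistency $y^{(0)} \equiv y$). Explicitly in vector form:

$$\frac{d}{dx}\begin{pmatrix} y^{(0)} \\ y^{(1)} \\ \vdots \\ y^{(N-2)} \\ y^{(N-1)} \end{pmatrix} = \begin{pmatrix} y^{(1)} \\ y^{(2)} \\ \vdots \\ y^{(N-1)} \\ f\left(y^{(N-1)}, \ldots, y^{(0)}, x\right) \end{pmatrix}. \tag{2.4}$$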

Recognizing that the combined simultaneous vector system of dimension $N$, with first-order derivatives, is equivalent to a single scalar equation of order $N$, we often say that the order of the coupled vector system is still $N$. (Sorry if that seems confusing. In practice you get the hang of it.)
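As a minimal sketch of the reduction in eq. (2.4), the Python function below wraps a scalar $N$th-order right-hand side into a vector derivative function. The name `to_first_order` and its calling convention are illustrative assumptions, not from the text; in particular it assumes the state is passed as the list $[y^{(0)}, y^{(1)}, \ldots, y^{(N-1)}]$, i.e. in the reverse of the argument order written in eq. (2.1).

```python
def to_first_order(f):
    """Turn an N-th order scalar ODE  y^(N) = f(Y, x)  into a first-order system.

    Y is the list [y^(0), y^(1), ..., y^(N-1)] (lowest derivative first, an
    illustrative convention). The returned function F(Y, x) gives dY/dx as in
    eq. (2.4): component j gets y^(j+1), and the last component gets f(Y, x).
    """
    def F(Y, x):
        return list(Y[1:]) + [f(Y, x)]
    return F


# Example: the harmonic oscillator y'' = -y, so N = 2 and f(Y, x) = -Y[0].
F = to_first_order(lambda Y, x: -Y[0])
print(F([1.0, 0.0], 0.0))   # [0.0, -1.0]: dy/dx = y^(1) = 0, d2y/dx2 = -y = -1
```

Any first-order integrator written for vectors can then be applied to `F` unchanged, which is the practical point of the reduction.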

This is formal mathematics and applies to all equations, but precisely such a set of coupled first-order equations will often also arise directly in the formulation of the practical problem we are trying to solve. Suppose we are trying to track the position of a fluid element in a three-dimensional steady flow. If we know the fluid velocity $\mathbf{v}$ as a function of position, $\mathbf{v}(\mathbf{x})$, then the equation of the track of a fluid element, i.e. the path followed by the element as it moves in time $t$, is

$$\frac{d\mathbf{x}}{dt} = \mathbf{v}(\mathbf{x}). \tag{2.5}$$

This is the equation we must solve to find a fluid streamline. It is of just the same form we derived by order reduction. Such a history of position as a function of time is called generically an orbit. Here the independent variable is $t$, and the dependent variable is $\mathbf{x}$. The vector $\mathbf{v}$ plays the role of the functions $(y^{(1)}, \ldots, y^{(N-1)}, f)$ on the right-hand side of eq. (2.4).

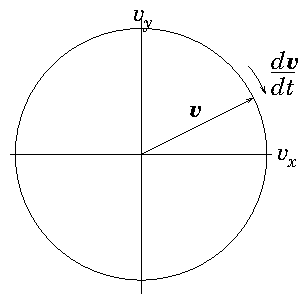

Orbits may not be just in space, they may be in

higher-dimensional

phase-space. For example

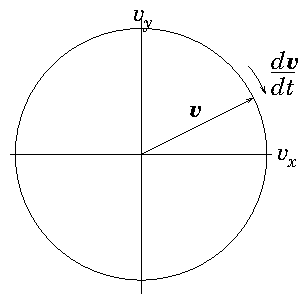

(see Fig

2.1) to

find the orbit of a charged particle

(e.g. proton) of mass

and charge

moving at velocity

in a

uniform magnetic field

, we observe

that it is subject to a force

. In the absence

of any other forces, it has an equation of motion

|

|

|

in which the acceleration depends upon the velocity. This is a first

order vector differential equation, in three dimensions, where

plays the role of the independent variable, and the dependent variable

is the vector velocity

. The vector

acceleration

which is the vector

derivative function depends upon all the components of

.

Figure 2.1: Orbit of the velocity of a particle moving in a uniform magnetic field is a circle in velocity-space perpendicular to the field ($\mathbf{B}$ along $z$ here).

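If, for our proton orbit, $\mathbf{B}$ is not uniform, but varies with position, then we need to know both position, $\mathbf{x}$, and velocity, $\mathbf{v}$, at all times along the orbit to solve it. The system then involves six-dimensional vectors consisting of the components of $\mathbf{v}$ and $\mathbf{x}$:

$$\frac{d}{dt}\begin{pmatrix}\mathbf{v}\\ \mathbf{x}\end{pmatrix} = \begin{pmatrix}(q/m)\,\mathbf{v}\times\mathbf{B}(\mathbf{x})\\ \mathbf{v}\end{pmatrix}. \tag{2.7}$$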
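The six-dimensional derivative function of eq. (2.7) can be sketched in plain Python as follows. The names `lorentz_rhs` and `B_of_x` are hypothetical; the field is supplied by the caller as a function of position, and the state is ordered $(v_x, v_y, v_z, x, y, z)$ as an assumed convention.

```python
def cross(a, b):
    """Cross product a x b of two 3-vectors given as sequences."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def lorentz_rhs(state, t, q_over_m, B_of_x):
    """Derivative function of eq. (2.7) for a charged particle in a field B(x).

    state = (vx, vy, vz, x, y, z). Returns d(state)/dt, i.e. the acceleration
    (q/m) v x B(x) followed by the velocity v. t is unused here (static field)
    but kept so the signature matches a generic f(y, x).
    """
    v, x = state[:3], state[3:]
    a = cross(v, B_of_x(x))
    return tuple(q_over_m * ai for ai in a) + tuple(v)


# Uniform field along z: a positive particle moving along +x is pushed towards -y.
uniform_Bz = lambda x: (0.0, 0.0, 1.0)
print(lorentz_rhs((1.0, 0.0, 0.0, 0.0, 0.0, 0.0), 0.0, 1.0, uniform_Bz))
```

Because the acceleration is always perpendicular to $\mathbf{v}$, a useful sanity check on any implementation is that the dot product of the first three returned components with the velocity vanishes.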

Very often, to find analytic solutions it feels more natural to eliminate some of the dependent variables, with the result that the order of the ODE is raised. So, for example, for a uniform magnetic field in the $z$-direction, the dynamics perpendicular to it separate out into

$$\frac{d^2 v_x}{dt^2} = -\Omega^2 v_x, \qquad \frac{d^2 v_y}{dt^2} = -\Omega^2 v_y \tag{2.8}$$

(writing $\Omega = qB/m$). The second-order uncoupled equations are familiar to us as simple harmonic oscillator equations, having solutions like $\cos\Omega t$ and $\sin\Omega t$. So they are easier to solve analytically. But the original first-order equations, even though they are coupled, are far easier to solve numerically. So we don't generally do the elimination in computational solutions.

2.2 Numerical Integration of Initial Value Problem

2.2.1 Explicit Integration

Now we consider how in practice to solve a first-order ODE in which all the boundary conditions are imposed at the same position in the independent variable. Such boundary conditions constitute what is called an "initial value problem". We start integrating forward in the independent variable (e.g. time or space) from a place where the initial values are specified. To simplify the discussion we will consider a single (scalar) dependent variable $y$, but note that the generalization to a vector of dependent variables is usually immediate, so the treatment is fine for higher-order equations that have been reduced to vectorial first-order form.

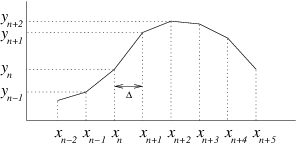

In general, numerical solution of differential equations requires us

to represent the solution, which is usually continuous, in a

discrete

manner where the values are given at a series of points rather than

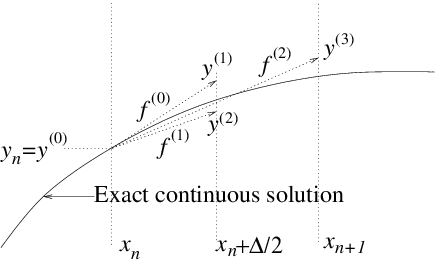

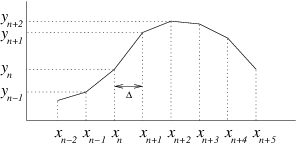

continuously. See Fig.

2.2.

Figure 2.2: Illustrating finite difference representation of a

continuous function.

The natural way to discretize the derivative is to write

$$\frac{dy}{dx} \approx \frac{y_{n+1} - y_n}{x_{n+1} - x_n} = f(y, x), \tag{2.9}$$

where the index $n$ denotes the value at the $n$th discrete step, and therefore

$$y_{n+1} = y_n + f(y, x)\,(x_{n+1} - x_n). \tag{2.10}$$

This equation tells us how $y$ changes from one step to the next. Starting from an initial position we can step discretely as far as we like, choosing the size of the independent-variable step $\Delta x = x_{n+1} - x_n$ appropriately.

A question that arises, though, is what to use for $y$ and $x$ inside the derivative function $f(y, x)$. The $x$ value can be chosen more or less at will, but before we've actually made the step, we don't know where we are going to end up in $y$, so we can't easily decide where in $y$ to evaluate $f$. The easiest answer, but not generally the best, is to recognize that at any point in stepping from $x_n$ to $x_{n+1}$ along the orbit, we already have the value $y_n$. So we could just use $f(y_n, x_n)$. This choice is said to be "explicit", and is sometimes called the Euler method. This method is not the best because it tends to have poor accuracy and poor stability.
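A minimal Python sketch of the explicit (Euler) advance, eq. (2.10) with $f$ evaluated at $(y_n, x_n)$. The helper names `euler_step` and `integrate` are illustrative; `integrate` simply takes `n` equal steps from `x0` to `x1` with whatever one-step rule it is given, so the same driver can be reused for other schemes.

```python
def euler_step(f, y, x, dx):
    """One explicit Euler step: y_{n+1} = y_n + f(y_n, x_n) * dx."""
    return y + f(y, x) * dx


def integrate(step, f, y0, x0, x1, n):
    """Advance y from x0 to x1 in n equal steps using the one-step rule `step`."""
    dx = (x1 - x0) / n
    y, x = y0, x0
    for _ in range(n):
        y = step(f, y, x, dx)
        x += dx
    return y


# dy/dx = y, y(0) = 1; the exact value at x = 1 is e = 2.71828...
for n in (1, 2, 10, 1000):
    print(n, integrate(euler_step, lambda y, x: y, 1.0, 0.0, 1.0, n))
```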

2.2.2 Accuracy and Runge-Kutta Schemes

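To illustrate the problem of accuracy, consider the derivative function expanded as a Taylor series about the $x_n$ position, writing $\eta = x - x_n$ and $\Delta x = x_{n+1} - x_n$. The derivative function $f(y, x)$ is a function of both $y$ and $x$. However, the solution for the orbit can be written $y = y(x)$. Therefore the function evaluated on the orbit, $f(y(x), x)$, is a function only of $x$, and we can write its (total) derivative as $df/dx = \partial f/\partial x + (dy/dx)\,\partial f/\partial y = \partial f/\partial x + f\,\partial f/\partial y$.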

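The Taylor expansion of this function is simply

$$f(y(x), x) = f_n + \eta\left.\frac{df}{dx}\right|_n + \frac{\eta^2}{2}\left.\frac{d^2 f}{dx^2}\right|_n + \ldots \tag{2.11}$$

We use the notation $f_n$ (etc.) to indicate values evaluated at position $x_n$.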

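If we substitute this Taylor expansion for $f$ into the differential equation we are trying to solve, $dy/dx = f$, and integrate term by term from $x_n$ to $x_{n+1}$, we get the exact solution of the differential equation:

$$y_{n+1} = y_n + f_n\Delta x + \frac{\Delta x^2}{2}\left.\frac{df}{dx}\right|_n + \frac{\Delta x^3}{6}\left.\frac{d^2 f}{dx^2}\right|_n + \ldots \tag{2.12}$$

We subtract from it whatever the finite-difference approximate equation is. In the case of (2.10) it is $y_{n+1} = y_n + f_n\Delta x$, and we find that the error in $y_{n+1}$ is

$$\epsilon_{n+1} = \frac{\Delta x^2}{2}\left.\frac{df}{dx}\right|_n + O(\Delta x^3). \tag{2.13}$$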

This tells us that the explicit Euler difference scheme is accurate only to first order in the size of the step $\Delta x$ (when the first derivative $df/dx$ is non-zero), because an error of order $\Delta x^2$ is present. That means if we make the step a factor of 2 smaller, the cumulative error, when integrating over a set total distance, gets smaller by (approximately) a factor of 2. (Because each step's error is 4 times smaller, but there are twice as many steps.) That's not very good. We would have to take very small steps, with $\Delta x$ much shorter than the distance over which $f$ changes appreciably, to get good accuracy.
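The factor-of-2 behaviour can be checked numerically. This sketch uses a test problem of our own choosing, $dy/dx = y$ with $y(0) = 1$ integrated to $x = 1$ (exact answer $e$); it halves the step repeatedly and prints the ratio of successive global errors of the explicit Euler scheme, which should approach 2.

```python
import math


def euler_error(n):
    """Global error at x = 1 of explicit Euler for dy/dx = y, y(0) = 1, n steps."""
    dx = 1.0 / n
    y = 1.0
    for _ in range(n):
        y += y * dx          # f(y_n, x_n) = y_n for this test problem
    return abs(y - math.e)


for n in (10, 20, 40, 80):
    # error ratio when the step is halved: ~2 for a first-order scheme
    print(n, euler_error(n), euler_error(n) / euler_error(2 * n))
```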

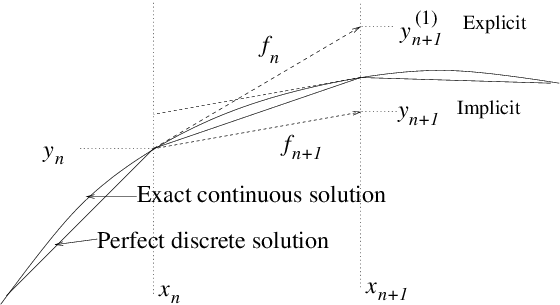

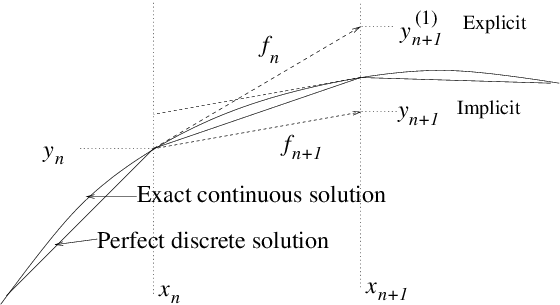

Figure 2.3: Optional steps using the derivative function evaluated at $(x_n, y_n)$ or at $(x_{n+1}, y^{(1)}_{n+1})$.

We can do better. Our error arose because we approximated the derivative function by using its value only at $x_n$. But once we've moved to the next position, and know (with some inaccuracy) the value $y_{n+1}$ and hence $f_{n+1}$ there, we can better estimate the $f$ we should have used. This process is illustrated in Fig. 2.3. In fact, by substitution from eq. (2.11) it is easy to see that if we use, instead, the advancing equation

$$y_{n+1} = y_n + \frac{1}{2}\left[f(y_n, x_n) + f\left(y^{(1)}_{n+1}, x_{n+1}\right)\right]\Delta x, \tag{2.14}$$

where $y^{(1)}_{n+1} = y_n + f_n\Delta x$ is the value of $y_{n+1}$ obtained at the end of our first (explicit Euler) advance, then we would obtain for our approximate advancing scheme

$$y_{n+1} = y_n + f_n\Delta x + \frac{\Delta x^2}{2}\left.\frac{df}{dx}\right|_n + O(\Delta x^3), \tag{2.15}$$

which now agrees with the full exact expansion (2.12) up to and including the $\Delta x^2$ term, and whose error, in comparison with that expression, is now of third order, rather than second. This second-order-accurate scheme gives cumulative errors proportional to $\Delta x^2$ and so converges much more quickly as we shorten the step length.

The reason we obtained a more accurate step was that we used a more accurate value for the average (over the step) of the derivative function. It is straightforward to improve the average even more, so as to obtain even higher-order accuracy. But to do that requires us to obtain estimates of the derivative function part way through the step as well as at the ends. That's because we need to estimate the first and second derivatives of $f$.

A Runge-Kutta method consists of taking a series of steps, each one of which uses the estimate of the derivative function obtained from the previous one, and then taking some weighted average of their derivatives.

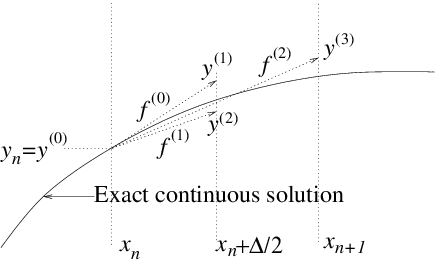

Figure 2.4: The Runge-Kutta fourth-order scheme, with four partial steps, evaluates the derivative function $f(y, x)$ at four places (giving $k_1, \ldots, k_4$), each determined by extrapolation along the previous derivative.

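Specifically, the fourth-order (accurate) Runge-Kutta scheme, which is by far the most popular and is illustrated in Fig. 2.4, uses:

$$\begin{aligned} k_1 &= f(y_n, x_n)\,\Delta x, \\ k_2 &= f(y_n + k_1/2,\; x_n + \Delta x/2)\,\Delta x, \\ k_3 &= f(y_n + k_2/2,\; x_n + \Delta x/2)\,\Delta x, \\ k_4 &= f(y_n + k_3,\; x_n + \Delta x)\,\Delta x, \end{aligned} \tag{2.16}$$

in which two steps are to the half-way point $x_n + \Delta x/2$. Then the following combination

$$y_{n+1} = y_n + \frac{k_1}{6} + \frac{k_2}{3} + \frac{k_3}{3} + \frac{k_4}{6} \tag{2.17}$$

gives an approximation accurate to fourth order.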

The Runge-Kutta method costs more computation per step, because it requires four evaluations of the function $f$, rather than just one. But that is often more than compensated by the ability to take larger steps than with the Euler method for the same accuracy.
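Eqs. (2.16) and (2.17) translate directly into code. The following Python sketch (function names are illustrative) applies the classical fourth-order step to the same test problem $dy/dx = y$; halving the step should now reduce the global error by a factor close to $2^4 = 16$.

```python
import math


def rk4_step(f, y, x, dx):
    """Classical fourth-order Runge-Kutta step, eqs. (2.16)-(2.17)."""
    k1 = f(y, x) * dx
    k2 = f(y + k1 / 2, x + dx / 2) * dx
    k3 = f(y + k2 / 2, x + dx / 2) * dx
    k4 = f(y + k3, x + dx) * dx
    return y + k1 / 6 + k2 / 3 + k3 / 3 + k4 / 6


def rk4_error(n):
    """Global error at x = 1 for dy/dx = y, y(0) = 1, using n RK4 steps."""
    dx, y, x = 1.0 / n, 1.0, 0.0
    for _ in range(n):
        y = rk4_step(lambda yy, xx: yy, y, x, dx)
        x += dx
    return abs(y - math.e)


for n in (5, 10, 20):
    print(n, rk4_error(n), rk4_error(n) / rk4_error(2 * n))   # ratio ~16
```

With only 10 steps the error is already of order $10^{-6}$, far smaller than explicit Euler achieves with 1000 steps on the same problem.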

2.2.3 Stability

The second, and possibly more important, weakness of explicit integration concerns stability. Consider a linear differential equation

$$\frac{dy}{dx} = -ky, \tag{2.18}$$

where $k$ is a positive constant. This of course has the solution $y = y_0 e^{-kx}$. But suppose we integrate it numerically using the explicit scheme

$$y_{n+1} = y_n + f(y_n, x_n)\,\Delta x = y_n(1 - k\Delta x). \tag{2.19}$$

This finite difference equation

has the solution

|

|

|

as may be verified by the simple observation that

. (This ratio is called the amplification

factor

.) If

is a small number,

then no difficulties will arise, and our scheme will produce

approximately correct results. However, a choice

compromises not only accuracy, but also

stability. The

resulting solution has alternating sign; it oscillates; but also its

magnitude

increases with

and will tend to infinity at large

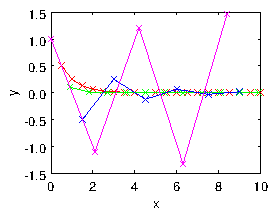

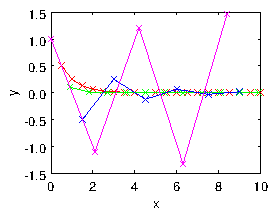

. It has become unstable, as illustrated in Fig.

2.5.

Figure 2.5: Explicit numerical integration of eq. (2.18), using eq. (2.19), leads to an oscillatory instability if the step length is too long. Four step lengths are shown.

In general, an explicit discrete advancing scheme requires the step in the independent variable to be less than some value (in this case $2/k$) in order to achieve stability.
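A short numerical experiment makes the stability limit $k\Delta x < 2$ concrete. This sketch (with $k = 1$ and $y_0 = 1$ chosen for illustration) iterates eq. (2.19) and prints $y_n$ after 20 steps for several step lengths: the magnitude decays for $k\Delta x < 2$, stays at 1 for $k\Delta x = 2$, and grows beyond that.

```python
def explicit_decay(k, dx, n, y0=1.0):
    """Iterate the explicit scheme of eq. (2.19): y_{n+1} = y_n * (1 - k*dx)."""
    y = y0
    for _ in range(n):
        y *= 1 - k * dx          # amplification factor 1 - k*dx
    return y


for dx in (0.5, 1.5, 2.0, 2.5):
    print(f"k*dx = {dx}: y_20 = {explicit_decay(1.0, dx, 20):.3e}")
```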

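An implicit advancing scheme, by contrast, is one in which the value of the derivative used to advance the variable is taken at the end of the step rather than at the beginning. For our example equation, this would be a numerical scheme of the form

$$y_{n+1} = y_n + f(y_{n+1}, x_{n+1})\,\Delta x = y_n - k y_{n+1}\Delta x. \tag{2.21}$$

It is easy to rearrange this equation into

$$y_{n+1} = \frac{y_n}{1 + k\Delta x}. \tag{2.22}$$

This has solution

$$y_n = \frac{y_0}{(1 + k\Delta x)^n}. \tag{2.23}$$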

For positive $k$ (the case of interest) this finite-difference equation never becomes unstable, no matter how big $\Delta x$ is, because the solution consists of successive powers of an amplification factor $1/(1 + k\Delta x)$ whose magnitude is always less than 1. This is a characteristic of implicit schemes: they are generally stable even for large steps.
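The implicit counterpart, eq. (2.22), can be iterated the same way. In this sketch (again with $k = 1$ and $y_0 = 1$ as illustrative choices) the amplification factor $1/(1 + k\Delta x)$ is always between 0 and 1, so the result decays monotonically for every step length, although large steps are of course inaccurate.

```python
import math


def implicit_decay(k, dx, n, y0=1.0):
    """Iterate the implicit scheme of eq. (2.22): y_{n+1} = y_n / (1 + k*dx)."""
    y = y0
    for _ in range(n):
        y /= 1 + k * dx          # amplification factor 1/(1 + k*dx) < 1 for k > 0
    return y


for dx in (0.5, 1.5, 2.5, 100.0):
    print(f"k*dx = {dx}: y_20 = {implicit_decay(1.0, dx, 20):.3e}")

# With a small step the implicit result approaches the exact exp(-k x):
print(implicit_decay(1.0, 0.001, 1000), math.exp(-1.0))
```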

2.3 Multidimensional Stiff Equations: Implicit Schemes

The question of stability for an order-one system (the scalar problem) is generally not very interesting, because instability of the explicit scheme occurs only when the step size is longer than the characteristic spatial scale of the problem, $1/k$. If you've chosen your step size so large, you are already failing to get an accurate solution of the equation. However, multidimensional (i.e. higher-order) sets of (vector) equations may have multiple solutions that have very different scale lengths in the independent variable. A classic example is an order-two homogeneous linear system with constant coefficients:

$$\frac{d\mathbf{y}}{dx} = \mathbf{A}\mathbf{y} = \begin{pmatrix} -50.5 & 49.5 \\ 49.5 & -50.5 \end{pmatrix}\mathbf{y}. \tag{2.24}$$

For any such linear system the solution can be constructed by consideration of the eigenvalues of the matrix $\mathbf{A}$: those numbers $\lambda$ for which there exists a solution to $\mathbf{A}\mathbf{u} = \lambda\mathbf{u}$. If these are $\lambda_j$ and the corresponding eigenvectors are $\mathbf{u}_j$, then $\mathbf{y}_j = \mathbf{u}_j e^{\lambda_j x}$ are solutions to the equation. The complete solution can be constructed as a sum of these different characteristic solutions, weighted by coefficients to satisfy the initial conditions. The point of our particular example matrix is that its eigenvalues are $-100$ and $-1$. Consequently, in order to integrate numerically a solution that has a significant quantity of the second, slowly changing, solution $\mathbf{u}_2 e^{-x}$, it is nevertheless necessary to ensure the stability of the first, rapidly changing, solution, $\mathbf{u}_1 e^{-100x}$. Otherwise, if the first solution is unstable, no matter how little of that solution we start with, it will eventually grow exponentially large and erroneously dominate our result. If an explicit advancing scheme is used, then stability requires $100\,\Delta x < 2$ as well as $\Delta x < 2$, and the $100\,\Delta x < 2$ condition is by far the more restrictive. There are then at least $50$ steps during the decay of the ($\lambda = -1$) solution of interest. Because this ratio is large, an explicit scheme is computationally expensive, requiring many steps. In general, the stiffness of a differential equation system can be measured by the ratio of the largest to the smallest eigenvalue magnitude. If this ratio is large, the system is stiff, and that means it is hard to integrate explicitly.
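The stiffness penalty can be seen directly. This sketch applies explicit Euler to a $2\times 2$ system $d\mathbf{y}/dx = \mathbf{A}\mathbf{y}$; the particular matrix used, $\begin{pmatrix}-50.5 & 49.5\\ 49.5 & -50.5\end{pmatrix}$, is an assumption chosen to have the stated eigenvalues $-100$ (eigenvector $(1,-1)$) and $-1$ (eigenvector $(1,1)$). We start almost entirely in the slow solution, with a tiny $10^{-6}$ admixture of the fast one, and compare a step just inside the limit $100\,\Delta x < 2$ with one just outside it.

```python
def explicit_linear(A, y0, dx, n):
    """Explicit Euler for dy/dx = A y with a 2x2 matrix A: y <- y + dx * (A y)."""
    y = list(y0)
    for _ in range(n):
        Ay = [A[0][0] * y[0] + A[0][1] * y[1],
              A[1][0] * y[0] + A[1][1] * y[1]]
        y = [y[0] + dx * Ay[0], y[1] + dx * Ay[1]]
    return y


# Assumed example matrix with eigenvalues -1 (along (1, 1)) and -100 (along (1, -1)).
A = [[-50.5, 49.5], [49.5, -50.5]]
eps = 1e-6                              # tiny amount of the fast solution
y0 = [1.0 + eps, 1.0 - eps]             # = (1, 1) + eps * (1, -1)

for dx in (0.019, 0.021):               # 100*dx just below / just above 2
    y = explicit_linear(A, y0, dx, 300)
    print(f"dx = {dx}: y at x = {300 * dx:.2f} is {y}")
```

With $\Delta x = 0.019$ the two components stay equal and decay smoothly; with $\Delta x = 0.021$ the minute fast component is amplified by a factor $|1 - 2.1| = 1.1$ per step and swamps the answer, even though the slow solution itself is perfectly well resolved.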

Using an implicit scheme avoids the necessity for taking very small steps. It does so at the cost of solving the matrix problem $\mathbf{y}_{n+1} = \mathbf{y}_n + \mathbf{A}\mathbf{y}_{n+1}\Delta x$. This requires the inversion of a matrix in order to evaluate

$$\mathbf{y}_{n+1} = (\mathbf{I} - \mathbf{A}\Delta x)^{-1}\mathbf{y}_n. \tag{2.25}$$

For a linear problem like the one we are considering, the single inversion, done once and for all, is a relatively small cost compared with the gain obtained by being able to take decent-length steps.
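For the same kind of constant-coefficient system, eq. (2.25) needs only one matrix inversion. In this sketch the $2\times 2$ inverse of $\mathbf{I} - \mathbf{A}\Delta x$ is written out explicitly (a shortcut valid only for $2\times 2$ matrices), and the same assumed example matrix is integrated with $\Delta x = 0.5$, 25 times the explicit stability limit. The result stays bounded and the fast component is strongly damped, although a step this large is of course not accurate for the slow solution (about $0.017$ at $x = 5$, versus the exact $e^{-5} \approx 0.0067$).

```python
def implicit_linear(A, y0, dx, n):
    """Backward (implicit) Euler for dy/dx = A y with a constant 2x2 matrix A.

    Each step solves y_{n+1} = y_n + A y_{n+1} dx, i.e. eq. (2.25). Because A and
    dx are fixed, M = I - A dx is inverted once, before the loop.
    """
    a, b = 1.0 - A[0][0] * dx, -A[0][1] * dx
    c, d = -A[1][0] * dx, 1.0 - A[1][1] * dx
    det = a * d - b * c
    Minv = [[d / det, -b / det], [-c / det, a / det]]    # explicit 2x2 inverse
    y = list(y0)
    for _ in range(n):
        y = [Minv[0][0] * y[0] + Minv[0][1] * y[1],
             Minv[1][0] * y[0] + Minv[1][1] * y[1]]
    return y


A = [[-50.5, 49.5], [49.5, -50.5]]      # assumed example: eigenvalues -1 and -100
y0 = [1.0 + 1e-6, 1.0 - 1e-6]
print(implicit_linear(A, y0, 0.5, 10))  # both components close to (2/3)**10
```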

All of this probably seems rather elaborate for a linear, constant-coefficient system, since we are actually able to solve it analytically when we know the eigenvalues and eigenvectors. However, it becomes much more significant when we realize that the stability of a nonlinear system, or of one in which the coefficients vary with $x$ or $\mathbf{y}$, for which numerical integration may be essential, is generally very well described by expressing it approximately as a linear system in the neighborhood of the region under consideration, and then evaluating the stability of the linearized system. The matrix that arises from linearization when the (vectorial) derivative function is $\mathbf{f}(\mathbf{y}, x)$ has the elements $A_{ij} = \partial f_i/\partial y_j$. An implicit solution then requires $\mathbf{I} - \mathbf{A}\Delta x$ to be inverted for every step, because it changes with position (if the derivative function is nonlinear).

In short, implicit schemes lead to greater stability, which is very important with stiff systems, but require matrix inversion.
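For a nonlinear derivative function, the linearized matrix $A_{ij} = \partial f_i/\partial y_j$ has to be re-evaluated as the solution moves. One common way to organize this (our choice here, not a prescription from the text) is to solve the implicit equation $\mathbf{y}_{n+1} = \mathbf{y}_n + \mathbf{f}(\mathbf{y}_{n+1}, x_{n+1})\Delta x$ by Newton iteration, each iteration solving a linear system with matrix $\mathbf{I} - \mathbf{A}\Delta x$. In this pure-Python sketch the Jacobian is estimated by forward finite differences and the linear solve is plain Gaussian elimination; all function names are illustrative.

```python
import math


def jacobian(f, y, x, rel_eps=1e-7):
    """Forward-difference estimate of A_ij = d f_i / d y_j at (y, x)."""
    f0 = f(y, x)
    n = len(y)
    J = [[0.0] * n for _ in range(n)]
    for j in range(n):
        h = rel_eps * max(1.0, abs(y[j]))
        yp = list(y)
        yp[j] += h
        fj = f(yp, x)
        for i in range(n):
            J[i][j] = (fj[i] - f0[i]) / h
    return J


def solve(M, b):
    """Solve M z = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    aug = [list(row) + [bi] for row, bi in zip(M, b)]    # augmented copy
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[piv] = aug[piv], aug[col]
        for r in range(col + 1, n):
            m = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= m * aug[col][c]
    z = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(aug[r][c] * z[c] for c in range(r + 1, n))
        z[r] = (aug[r][n] - s) / aug[r][r]
    return z


def implicit_step(f, y, x, dx, iters=6):
    """One backward-Euler step, y_new = y + f(y_new, x + dx) * dx, via Newton.

    Each iteration linearizes f about the current guess (matrix A) and solves
    (I - A dx) delta = -residual, so I - A dx is re-formed every iteration.
    """
    n = len(y)
    Y = list(y)                                  # initial guess: start of step
    for _ in range(iters):
        fY = f(Y, x + dx)
        resid = [Y[i] - y[i] - fY[i] * dx for i in range(n)]
        A = jacobian(f, Y, x + dx)
        M = [[(1.0 if i == j else 0.0) - A[i][j] * dx for j in range(n)]
             for i in range(n)]
        delta = solve(M, [-r for r in resid])
        Y = [Y[i] + delta[i] for i in range(n)]
    return Y


# Nonlinear scalar test, dy/dx = -y^2 with y_n = 1 and dx = 1: the implicit
# equation Y = 1 - Y^2 has the positive root (sqrt(5) - 1)/2.
print(implicit_step(lambda y, x: [-y[0] ** 2], [1.0], 0.0, 1.0),
      (math.sqrt(5) - 1) / 2)
```

A production code would normally call a library linear solver and stop iterating on a convergence test rather than after a fixed count; the fixed `iters` here just keeps the sketch short.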

2.4 Leap-Frog Schemes

Codes such as particle-in-cell simulations of plasmas, atomistic simulations, or any codes that do a large amount of orbit integration, generally want to use as cheap a scheme as possible to maintain their speed. The step size is often dictated by questions other than the accuracy of the orbit integration. In such situations, Runge-Kutta or implicit schemes are rarely used. Instead, an accurate orbit integration can be obtained by recognizing that Newton's second law of motion (acceleration proportional to force) is a second-order vector equation that can most conveniently be split into two first-order vector equations involving position, velocity, and acceleration. The velocity we want for the equation governing the evolution of position, $d\mathbf{x}/dt = \mathbf{v}$, is the average velocity during the motion between two positions. An unbiased estimate of that velocity is to take it to be the velocity at the center of the position step. So if the times at which we evaluate the position of the particle are denoted $t_n$, then we want the velocity at time $(t_n + t_{n+1})/2$. We might call this time $t_{n+1/2}$ and the velocity $\mathbf{v}_{n+1/2}$. Also, the acceleration we want for the equation for the evolution of velocity, $d\mathbf{v}/dt = \mathbf{a}$, is the average acceleration between two velocities. If the velocities are represented at $t_{n-1/2}$ and $t_{n+1/2}$, then the time at which we want the acceleration is $t_n$, and we might call that acceleration $\mathbf{a}_n$.

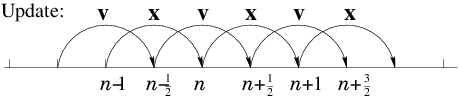

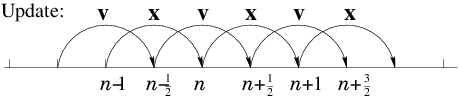

We can therefore construct an appropriate centered integration scheme as follows, starting from position $\mathbf{x}_n$.

(1) Move the particles using their velocities $\mathbf{v}_{n+1/2}$ to find the new positions $\mathbf{x}_{n+1} = \mathbf{x}_n + \mathbf{v}_{n+1/2}\Delta t$ (with $\Delta t = t_{n+1} - t_n$); this is called the drift step.

(2) Accelerate the velocities using the accelerations $\mathbf{a}_{n+1}$ evaluated at the new positions to obtain the new velocities $\mathbf{v}_{n+3/2} = \mathbf{v}_{n+1/2} + \mathbf{a}_{n+1}\Delta t$; this is called the kick step.

(3) Repeat (1) and (2) for the next time step, $n \to n+1$, and so on.

Each of the drift and kick steps can be a simple explicit advance. But because the velocities are suitably time-shifted relative to the positions and accelerations, the result is second-order accurate. Such a scheme is called a Leap-Frog scheme, in reference to the children's game where each of two players alternately jumps over the back of the other in moving to the new position. Velocity and position are jumping over one another in time; they never land at the same time (or place). See Fig. 2.6.

Figure 2.6: Staggered values for $\mathbf{x}$ and $\mathbf{v}$ are updated leap-frog style.
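A drift-kick Leap-Frog loop for a single particle in one dimension might look like the Python sketch below. The harmonic-oscillator acceleration $a(x) = -x$ and all names are illustrative assumptions. With the velocity started at $t_{1/2}$ by the half-step $v_{1/2} = v_0 + a_0\Delta t/2$ and $\Delta t = 0.1$, the positions follow $x_n = \cos(n\theta)$ exactly, with $\cos\theta = 1 - \Delta t^2/2$, so the amplitude stays at 1 rather than drifting, even after 10,000 steps.

```python
def leapfrog(accel, x0, v_half, dt, nsteps):
    """Drift-kick Leap-Frog: positions at t_n, velocities at t_{n+1/2}.

    accel(x) returns the acceleration at position x; v_half is v at t_{1/2}.
    Returns the lists of positions x_0..x_N and velocities v_{1/2}..v_{N+1/2}.
    """
    xs, vs = [x0], [v_half]
    x, v = x0, v_half
    for _ in range(nsteps):
        x = x + v * dt            # drift: x_{n+1} = x_n + v_{n+1/2} dt
        v = v + accel(x) * dt     # kick:  v_{n+3/2} = v_{n+1/2} + a_{n+1} dt
        xs.append(x)
        vs.append(v)
    return xs, vs


dt = 0.1
accel = lambda x: -x                       # assumed example: harmonic oscillator
x0, v0 = 1.0, 0.0
v_half = v0 + accel(x0) * dt / 2           # half-step start
xs, vs = leapfrog(accel, x0, v_half, dt, 10_000)
print(max(xs), min(xs))                    # stays within [-1, 1] up to rounding
```

Only one acceleration evaluation is needed per step, which is why this scheme is attractive when millions of orbits must be pushed.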

One trap for the unwary in a Leap-Frog scheme is the specification of initial values. If we want to calculate an orbit which has specified initial position $\mathbf{x}_0$ and velocity $\mathbf{v}_0$ at time $t_0$, then it is not sufficient simply to set the initial velocity equal to the specified $\mathbf{v}_0$. That is because the first velocity, which governs the position step from $\mathbf{x}_0$ to $\mathbf{x}_1$, is not $\mathbf{v}_0$ but $\mathbf{v}_{1/2}$. To start the integration off correctly, therefore, we must take a half-step in velocity by putting $\mathbf{v}_{1/2} = \mathbf{v}_0 + \mathbf{a}_0\Delta t/2$ before beginning the standard integration.
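The effect of the half-step start can be measured. In this sketch the oscillator $a(x) = -x$ with $x_0 = 1$, $v_0 = 0$, $\Delta t = 0.1$ and 100 steps are illustrative choices; one run uses $v_{1/2} = v_0 + a_0\Delta t/2$ and the other naively uses $v_0$ as the first velocity. The naive start leaves a phase and amplitude error roughly ten times larger at $t = 10$.

```python
import math


def leapfrog_final_x(x0, v0, dt, nsteps, half_step_start):
    """Leap-Frog for the oscillator a(x) = -x; returns x at t = nsteps * dt.

    If half_step_start is True the first velocity is v_{1/2} = v0 + a(x0) dt/2;
    otherwise v0 itself is (incorrectly) used as v_{1/2}.
    """
    accel = lambda x: -x
    v = v0 + accel(x0) * dt / 2 if half_step_start else v0
    x = x0
    for _ in range(nsteps):
        x += v * dt               # drift
        v += accel(x) * dt        # kick
    return x


dt, n = 0.1, 100                  # integrate to t = 10
exact = math.cos(n * dt)          # exact solution for x0 = 1, v0 = 0
err_half = abs(leapfrog_final_x(1.0, 0.0, dt, n, True) - exact)
err_naive = abs(leapfrog_final_x(1.0, 0.0, dt, n, False) - exact)
print(f"half-step start: {err_half:.4f}, naive start: {err_naive:.4f}")
```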

Leap-Frog schemes generally possess important conservation properties, such as conservation of momentum, that can be essential for realistic simulation with a large number of particles.

Worked Example: Stability of finite differences

Find the stability limits when solving, by discrete finite differences of length $\Delta x$ using explicit or implicit schemes, the initial value equation

$$\frac{d^2 y}{dx^2} + \alpha\frac{dy}{dx} + k^2 y = g(x), \tag{2.26}$$

where $k$ is a positive constant and $\alpha \ge 0$.

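First, reduce the equation to standard first-order form by writing

$$\frac{d}{dx}\begin{pmatrix} y \\ dy/dx \end{pmatrix} = \begin{pmatrix} 0 & 1 \\ -k^2 & -\alpha \end{pmatrix}\begin{pmatrix} y \\ dy/dx \end{pmatrix} + \begin{pmatrix} 0 \\ g(x) \end{pmatrix}. \tag{2.27}$$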

Now, notice that, in either form, this equation has a sort of driving term $g(x)$ independent of $y$. It can be removed from the stability analysis by considering a new variable $\tilde{\mathbf{y}}$, which is the difference between the finite-difference numerical solution that we are trying to analyse, $\mathbf{y}_n$, and the exact solution of the differential equation, $\mathbf{y}(x_n)$. Thus $\tilde{\mathbf{y}}_n = \mathbf{y}_n - \mathbf{y}(x_n)$. The quantity $\tilde{\mathbf{y}}$ satisfies a discretized form of the homogeneous version of eq. (2.26), with the term $g$ removed. Consequently, analysing that homogeneous equation tells us whether the difference $\tilde{\mathbf{y}}$ between the numerical and the exact solutions is stable or not. That is what we really want to know. For stability, we pay no attention to $g$. The resulting homogeneous equation is of exactly the same form as eq. (2.24): $d\tilde{\mathbf{y}}/dx = \mathbf{A}\tilde{\mathbf{y}}$. Each independent solution for $\tilde{\mathbf{y}}$ is thus an eigenvector $\mathbf{u}$ of the matrix $\mathbf{A}$ such that $\mathbf{A}\mathbf{u} = \lambda\mathbf{u}$. The eigenvalue $\lambda$ decides its stability. An explicit (Euler) scheme of step length $\Delta x$ will result in a step amplification factor $1 + \lambda\Delta x$, such that $\tilde{\mathbf{y}}_{n+1} = (1 + \lambda\Delta x)\tilde{\mathbf{y}}_n$ with $\tilde{\mathbf{y}}_n \propto \mathbf{u}$, and will be unstable if $|1 + \lambda\Delta x| > 1$.

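The eigenvalues of $\mathbf{A}$ satisfy

$$\det(\mathbf{A} - \lambda\mathbf{I}) = \lambda^2 + \alpha\lambda + k^2 = 0, \tag{2.28}$$

with solutions $\lambda = -\alpha/2 \pm \sqrt{\alpha^2/4 - k^2}$. These solutions are complex if $\alpha^2 < 4k^2$, in which case

$$|1 + \lambda\Delta x|^2 = \left(1 - \frac{\alpha\Delta x}{2}\right)^2 + \left(k^2 - \frac{\alpha^2}{4}\right)\Delta x^2 = 1 - \alpha\Delta x + k^2\Delta x^2 \tag{2.29}$$

is greater than unity unless $\Delta x < \alpha/k^2$, which is the stability criterion of the Euler scheme. If $\alpha = 0$, so that the homogeneous equation is an undamped harmonic oscillator, the explicit scheme is unstable for any $\Delta x$. A fully implicit scheme, by contrast, has amplification factor $1/(1 - \lambda\Delta x)$, for which $|1 - \lambda\Delta x|^{-2} = 1/(1 + \alpha\Delta x + k^2\Delta x^2)$, always less than one. The implicit scheme is always stable.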
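The worked-example result can be checked numerically. Assuming the damped-oscillator form $y'' + \alpha y' + k^2 y = g$, so that $\mathbf{A} = \begin{pmatrix}0 & 1\\ -k^2 & -\alpha\end{pmatrix}$ and the eigenvalues satisfy $\lambda^2 + \alpha\lambda + k^2 = 0$, this sketch uses the illustrative values $\alpha = 0.5$, $k = 1$ (complex eigenvalues, predicted explicit limit $\Delta x < \alpha/k^2 = 0.5$). It computes the roots with Python's `cmath` module and evaluates the largest explicit and implicit amplification-factor magnitudes.

```python
import cmath


def amplification(alpha, k, dx):
    """Largest |amplification factor| over both eigenvalues of
    A = [[0, 1], [-k^2, -alpha]], for explicit (1 + lam dx) and
    fully implicit (1 / (1 - lam dx)) steps of length dx."""
    disc = cmath.sqrt(alpha ** 2 / 4 - k ** 2)       # complex if alpha^2 < 4 k^2
    lams = (-alpha / 2 + disc, -alpha / 2 - disc)
    explicit = max(abs(1 + lam * dx) for lam in lams)
    implicit = max(abs(1 / (1 - lam * dx)) for lam in lams)
    return explicit, implicit


alpha, k = 0.5, 1.0
limit = alpha / k ** 2                            # predicted explicit limit on dx
for dx in (0.5 * limit, limit, 2 * limit, 10.0):
    e, i = amplification(alpha, k, dx)
    print(f"dx = {dx:5.2f}: explicit {e:.4f}, implicit {i:.4f}")
```

The explicit factor crosses 1 at $\Delta x = \alpha/k^2$, while the implicit factor stays below 1 for every step tried; setting `alpha = 0.0` shows the explicit factor exceeding 1 even for tiny steps.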

Exercise 2. Integrating Ordinary Differential Equations.

1. Reduce the following higher-order ordinary differential equations to first-order vector differential equations, which you should write out in vector format.

(a)

(b)

(c)

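2. Accuracy order of ODE schemes. For notational convenience, we start at $x = y = 0$ and consider a small step in $x$ and $y$ of the ODE $dy/dx = f(y, x)$. The Taylor expansion of the derivative function along the orbit is

$$f(y(x), x) = f_0 + x\left.\frac{df}{dx}\right|_0 + \frac{x^2}{2}\left.\frac{d^2 f}{dx^2}\right|_0 + \ldots \tag{2.30}$$

(a) Integrate term by term to find the solution for $y(x)$ to third order in $x$.

(b) Suppose $y^{(1)} = x f(0, 0)$. Find $y^{(1)} - y(x)$ to second order in $x$.

(c) Now consider $y^{(2)} = x f(y^{(1)}, x)$; show that it is equal to $x f(y(x), x)$ plus a term that is third order in $x$.

(d) Hence find $y^{(2)} - y(x)$ to second order in $x$.

(e) Finally show that $y^{(3)} = (y^{(1)} + y^{(2)})/2$ is equal to $y(x)$ accurate to second order in $x$.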

[Comment. The third-order term in part (c) involves the partial derivative $\partial f/\partial y$ rather than the derivative $df/dx$ along the orbit. Proving rigorously that the fourth-order Runge-Kutta scheme really is fourth-order is rather difficult, because it requires keeping track of such partial derivatives.]

Programming Exercise

Write a program to integrate numerically from

to

the ODE

with

a positive constant, starting from

, proceeding

as follows.

(a) Use the explicit Euler scheme

(b) Use the implicit scheme

In each case, find numerically the

fractional error at

for the following choices of timestep.

(i)

(ii)

(iii)

(c) Find experimentally the timestep value at which the explicit scheme becomes unstable. Verify that the implicit scheme never becomes unstable.
